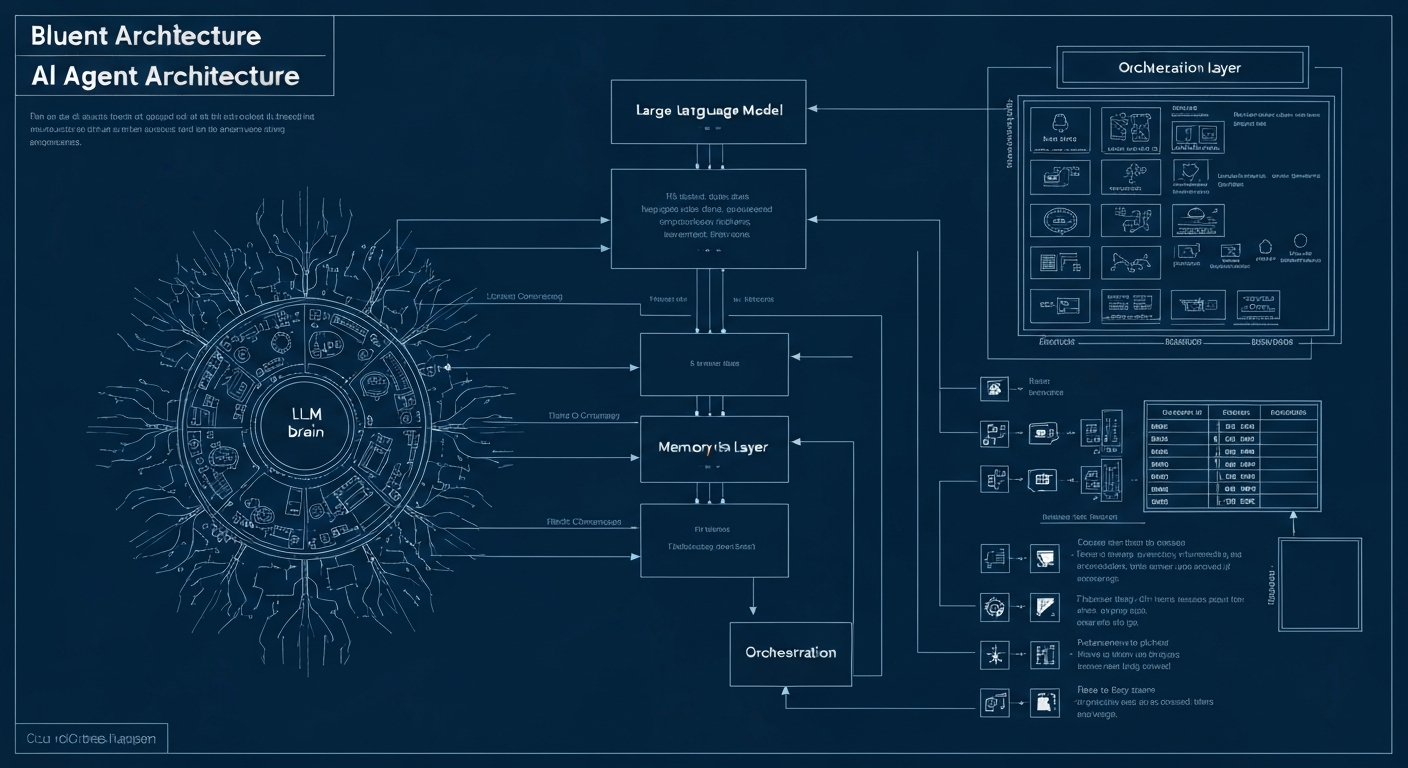

Understanding AI agents at the architecture level helps you make better decisions about implementation, vendor selection and customization. This article explains the four fundamental building blocks of modern AI agents: the LLM as reasoning engine, tools for action, memory for context and orchestration for complex workflows.

Building Block 1: The LLM (Large Language Model)

The LLM is the brain of an AI agent. It processes text, understands instructions, reasons about problems and determines what actions to take. Popular LLMs used as agent cores include GPT-4o (OpenAI), Claude 3.5 Sonnet (Anthropic) and Gemini 1.5 Pro (Google).

Building Block 2: Tools

Tools give an AI agent the ability to act in the real world. Without tools, an LLM is just a text generator. With tools, an agent can send emails, query databases, call APIs, read and write files, scrape web pages and control external services.
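As a rough illustration of the idea above, a tool can be treated as an ordinary function that the agent runtime looks up by name when the model asks for it. The sketch below assumes the model emits its request as a JSON object with `name` and `arguments` fields. That shape, the `TOOLS` registry, the `tool` decorator and the stubbed `send_email` and `query_database` functions are all hypothetical and chosen only for this example.

```python
import json
from typing import Callable, Dict

# Hypothetical registry: maps a tool name to a plain Python function.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function as a tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def send_email(to: str, subject: str) -> str:
    # Stub: a real tool would call an email service here.
    return f"email queued for {to}: {subject}"


@tool
def query_database(sql: str) -> str:
    # Stub: a real tool would run the query against a database.
    return f"0 rows returned for: {sql}"


def dispatch(tool_call_json: str) -> str:
    """Run the tool the model asked for and return its result as text."""
    call = json.loads(tool_call_json)
    name = call.get("name")
    if name not in TOOLS:
        # Report the problem as text so the model can react to it.
        return f"error: unknown tool {name!r}"
    return TOOLS[name](**call.get("arguments", {}))


request = '{"name": "send_email", "arguments": {"to": "a@example.com", "subject": "Hi"}}'
print(dispatch(request))
```

Returning errors as text rather than raising is one possible design choice: it lets the result be fed back to the model in the same way as a successful tool output.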

Building Block 3: Memory

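Memory lets an AI agent retain context within a conversation and carry information across sessions. Short-term memory is the model's context window, which holds the current conversation; long-term memory is typically kept in an external store, such as a vector database like Pinecone, and retrieved when it is relevant.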
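The two kinds of memory can be sketched in a few lines. This is a minimal stand-in, not how Pinecone or any specific product works: short-term memory is modeled as a bounded list of recent turns, and long-term memory as an in-memory list searched by cosine similarity. The `embed` function is a toy word-count vector used here in place of a real embedding model, and `AgentMemory` and its method names are hypothetical.

```python
import math
from collections import Counter, deque
from typing import Deque, List, Tuple


def embed(text: str) -> Counter:
    # Toy word-count "embedding"; a real system would call an embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class AgentMemory:
    def __init__(self, window: int = 4) -> None:
        # Short-term: only the most recent `window` turns are kept.
        self.short_term: Deque[str] = deque(maxlen=window)
        # Long-term: (vector, text) pairs; a vector database would replace this list.
        self.long_term: List[Tuple[Counter, str]] = []

    def add_turn(self, text: str) -> None:
        self.short_term.append(text)

    def remember(self, fact: str) -> None:
        self.long_term.append((embed(fact), fact))

    def recall(self, query: str, k: int = 1) -> List[str]:
        # Return the k stored facts most similar to the query.
        q = embed(query)
        ranked = sorted(self.long_term, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


memory = AgentMemory(window=2)
memory.remember("customer prefers email contact")
memory.remember("invoice is due on friday")
print(memory.recall("how does the customer prefer contact"))
```

In a production setup the `long_term` list would be replaced by calls to a persistent store, so that recalled facts survive across sessions.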

Building Block 4: Orchestration

Orchestration determines how an AI agent handles complex, multi-step tasks. Two dominant patterns are the ReAct loop, in which the agent repeatedly reasons about the next step, acts and observes the result, and multi-agent orchestration. Match-AI's AI agent uses multi-agent orchestration, in which a central orchestrator coordinates specialist agents.
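Both patterns can be sketched without a real model. In the example below, `scripted_model` is a hard-coded stand-in for an LLM call, the `Action: tool | input` and `Final: answer` text formats are assumptions made for this sketch, and `orchestrate` routes by simple keyword match. None of this describes Match-AI's actual implementation; it only illustrates the control flow.

```python
from typing import Callable, Dict, List


def calculator(expression: str) -> str:
    # Hypothetical tool: handles only "a + b" to keep the sketch small.
    left, right = expression.split("+")
    return str(int(left) + int(right))


TOOLS: Dict[str, Callable[[str], str]] = {"calculator": calculator}


def scripted_model(history: List[str]) -> str:
    # Stand-in for an LLM: act first, then answer once an observation exists.
    if history[-1].startswith("Observation:"):
        return "Final: " + history[-1][len("Observation:"):].strip()
    return "Action: calculator | 2 + 3"


def react(task: str, model: Callable[[List[str]], str], max_steps: int = 5) -> str:
    history = [f"Task: {task}"]
    for _ in range(max_steps):
        step = model(history)                      # Reason: model picks the next step
        history.append(step)
        if step.startswith("Final:"):
            return step[len("Final:"):].strip()
        name, arg = step[len("Action:"):].split("|", 1)
        result = TOOLS[name.strip()](arg.strip())  # Act: run the chosen tool
        history.append(f"Observation: {result}")   # Observe: feed the result back
    return "stopped: step limit reached"


def orchestrate(task: str, specialists: Dict[str, Callable[[str], str]]) -> str:
    # Central orchestrator: hand the task to the first specialist whose keyword matches.
    for keyword, agent in specialists.items():
        if keyword in task.lower():
            return agent(task)
    return "no specialist available"


specialists = {"add": lambda task: react(task, scripted_model)}
print(orchestrate("Add 2 and 3", specialists))
```

The `max_steps` limit is a deliberate safeguard in this sketch: without it, a model that never produces a final answer would loop indefinitely.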